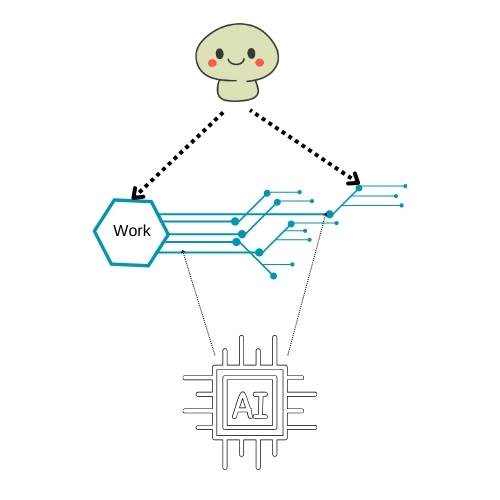

In my recent mania of doing too many things at once – training a model on latent human skills, funding applications, and building my startup[1] – I arrived at an uncomfortable conclusion, one I probably wouldn’t have reached before ChatGPT. At the heart of it are artists who feel their art and their accumulated skill are being stolen, absorbed, and redistributed by AI without acknowledgment, with the consequence of potential financial and existential harm.

The uncomfortable conclusion I came to is this: Revenue must attach to layers that AI cannot commoditize as a statistical pattern.

How AI “Steals”

I sympathize with that, but my insight today has put my restlessness about it at ease. This thought was triggered by reading comments like “AI is trained on stolen data and is copying the work of others” and “AI is taking other people’s skills and ruining their careers.” The most naive argument against this is “That is exactly what humans do, just slower.” This issue is partly legal, partly ethical, but I want a more futuristic framework to resolve it.

Generative AI models learn by digesting enormous amounts of text, video, audio, and images scraped from the internet, books, and code repositories. Large language models like ChatGPT predict the next word in a sequence, picking up patterns along the way. When an artist’s signature style appears in AI-generated content, it’s because the model has statistically absorbed and recombined patterns similar to theirs. The result feels like theft, even though it is a side effect of pattern recognition rather than intent or malice.
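For the technically curious, here is a toy sketch of what “predicting the next word from patterns” can mean. It is a simple word-pair counter, not how ChatGPT actually works (real models use neural networks trained on vastly more data), and the function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Hypothetical training text: the "model" only ever sees these words.
corpus = "the blues is a feeling the blues is a story the blues is old"
model = train_bigrams(corpus)

print(predict_next(model, "blues"))  # is
print(predict_next(model, "is"))     # a
print(predict_next(model, "jazz"))   # None (never seen in training)
```

The counter has no idea what the blues is. It only reproduces which word tended to follow which, and that is the sense in which a pattern can be recreated without the experience that produced it.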

As a musician, I’ve wondered: how do 10-year-old children play blues music[2] that sounds as if its musical phrasing has emerged from the wisdom and experience of a man who has seen things that must not be seen? Patterns CAN be recognized and recreated without the phenomena that created those patterns in the first place. It IS a human skill, which we use liberally on each other by taking what others created through their experience and using it. In short: someone creates a technique or a method, and others merely use it.

We don’t only fork git repositories. We fork each other.

And now we have taught AI to fork us; consequently, we get both pleasure and pain from it.

For those not familiar with GitHub, here’s why I say “fork” – forking is taking someone’s code, putting it in a sandbox, playing with it, improving it, modifying it, and often redistributing it for the better. This is how technology has been evolving. People use each other, but ultimately give back something bigger that helps the user and the used.

Personal Losses and Unexpected Gains

I am an artist, I am an author, I’ve made music, and I have written more than 1.5 million words on psychology before LLMs existed. And I am okay with the fact that AI has taken that work, learned from it, and shared it with others, even though it cost me my entire blogging career, with a daily readership of 5000 dropping to 500. It doesn’t even matter that I don’t make money on the blog or that people don’t visit, because everyone else gets easier access to a very distilled influence of what I said on this blog.

But what I gained in return was the ability to build apps, design tech, learn, and generate content for myself, which gave me back my time and a new direction for work. It gave me ready-to-use skills without the training burden that would otherwise need many lifetimes of starting over.

It is a luxury: the throughput of 20 other professionals for less than 100 dollars a month without the required professional training costs. Human + AI is a whole far, far greater than the sum of its components.

And this is true for most people, even the designers who lost 90% of their income because free image creation using AI was good enough for 90% of their clients. The same designers can now do far more than design because they have access to AI. Someone good at Photoshop now needs to be good at integrating Photoshop into a full pipeline and at making very strong executive decisions about 10 other things to justify creative value.

The elephant in the room is this, and I see it in tech-naive people whose app ideas assume that AI will do the main processing while they handle the business. What do you ask the AI to do? Describe your request? Define a feature set? The conversion of thought into a real solution to a real problem requires highly refined human judgment. It may be artistic, but real problems remain problems because a solution is not obvious. Getting something done has become easier, but choosing highly articulate goals has gotten harder, simply because AI can’t take responsibility and bear the risk. When execution becomes trivial, deciding what to execute becomes non-trivial. A person can only use the advantages of AI if they can get the AI to convert their motivations into a product.

Even though LLMs are accused of using unauthorized data, they gave everyone the ability to do hundreds of other things at a professional level. But to use them to boost financial power, people have to be creative and purpose-driven in how they use everything they gain.

I think we must acknowledge this. Entire companies and businesses rest on AI as infrastructure. Every future child who becomes a parent will consider this obvious.

Win-Win Situation for Human Throughput

If you start counting the gains and losses per person, you’ll see that most people get more than they give back, or even pay for. And pivoting to using what they gained is a rational business decision. Some people say this means AI has democratized skill. But I believe AI has democratized skill only for those who do not have it, and it has given an exponentially increasing unfair advantage to those who do. This boost takes a person’s skills and puts them in a higher layer of abstraction, where the skill directly meets other processes and other skills that generate value.

Most recent phenomenon: Anyone can set up a webapp on their local machine, but very few can ship it to production in a way that scales. Anyone can build a productivity tool by using Cursor or Claude, but very few can use all the tools to do R&D and invent something new. The floor has risen, but the universe has expanded.

AI empowers non-programmers to code and non-designers to create images and text. A single prompt can become a website, a logo, or a blog post. Everyone can have a business plan, a pitch, and a financial planning document without knowing how to make one. Skills you already have can be multiplied, and skills you never had are suddenly within reach without the hardship of acquiring them. Does this make acquiring them worthless? No. If you don’t acquire them, you just lose the unfair advantage.

Generative AI has turned a scarcity-based human activity, commonly done by artistic people, into an abundant resource. It takes the feeling “I can do what most others can’t” and transforms it into “What I did no longer matters.” But I’ll restrict my analysis to the less emotional factors in this problem. The artists who lost revenue have to face a harsher reality: they must become asymmetrically advantaged over others by using the AI tools that make 1 person as productive as a team.

In no way am I saying people don’t suffer because of losing their source of money. But the claim that AI caused that suffering needs to be revised. The problem is that rewinding the world by 6 years, to 2020 and a state before gen AI, doesn’t really change the suffering. Maybe just the type of it. You are always at risk of being replaced by someone better, faster, and cheaper. Is it really such a big deal to shift the blame from a different human to AI? Our total suffering just shifts; the person or thing you blame changes, and that really is fine for most because that is a constant human experience, regardless of whatever is around.

But I do imagine shifting blame is a direct existential threat.

Human 1: At least humans screwed me over before this AI business, we suck, but it’s our nature. But now, something non-human beats us at our own game?

Human 2: Fork, this is humiliating… we made AI so we can suck less.

This win-win has 1 major point of failure – AI companies restricting access or limiting iterative improvements. Capitalism that ignores human alignment and decision-makers who ignore social responsibility will undermine this AI gain and devolve the whole system into exploitation and diminishing returns as a service (DRAAS™). For now, I will choose to be cautiously optimistic.

The Hard Lesson

This win-win situation, however, is not my key insight. There is something that most seasoned entrepreneurs have learned the hard way. Given enough time, every business model will fail. The business of sticking to 1 craft, which creates a digital output for human consumption, is entering its death; it may survive, or it may live on as a zombie. But make it 2 crafts, and make the output non-digital, and you’ll slow its death. Make it 5 crafts, and its death will be even slower.

Everyone needs to pivot their business model, whether it is a model built on their own skill or one run by other people’s skills. AI accelerates this timeline. So, diversifying your crafts becomes a way to stay alive as the market shifts.

Digital artists typically have a 1-skill 1-product business model: they sell their creations using 1 repeatable set of skills, or they work on projects that depend on repeating their long-term skills, such as video/photo editing, visual design, audio production, or even grant writing. Any threat to the demand for their creations and professional identity will create economic landslides. For them to resist the threat of being replaced by gen AI, their source of income has to be tied to more diverse skills or decisions that are not directly related to their past artistic output.

Revenue must attach reliably to layers AI cannot commoditize as a statistical pattern. That may mean physical experiences, end-to-end development of multi-layered digital systems, or embedding art into environments that require constant human supervision, such that the artistic supervision itself becomes the product.

Increasing Value for Money and Time

It sucks that our past method of creation won’t be the money-bringer. What will be is our ability to step outside our skill and become more abstract, more system-level, and more like an end-to-end 1-person team once execution is commoditized. How we accelerate our own input into a monetizable strategy depends a lot on how the newfound advantages are used to serve a goal. A goal that is not just an output of a skill, but a more planned and reasoned-out method of creating value from skill.

And this value is not necessarily for economic productivity. I personally spend my time using AI for pure indulgence because I can now indulge in sci-fi content created by AI to my precise whims and fancies. Being able to self-indulge in unique ways is also an affordance of time given to us by this speed-up, and the ability to indulge without needing the skills is a luxury that we are just beginning to appreciate. I think to myself, “No way I could enjoy videos of aliens and space-time tearing up, and monsters made in my image, without knowing how to be a professional video and animation artist.”

A New Kind of Fairness

I got a lot more than I gave. For some, maybe you don’t like what you got in return, or maybe it is not something you care about. But you still got it. Like your genetics. Your future decisions depend on what you have.

Eventually, we ALL will get access to all those incremental skill boosts and capabilities for the price of giving AI companies our creations. It’s time to switch to a business model that doesn’t die because of this. We must release ourselves from the limits of 1-skill 1-product business models if we are to make money from our productivity.

From a distance, the bargain looks fair: our collective creativity fed the machine; the machine feeds each of us new capabilities. We mourn lost revenue and shrinking audiences, but we gain time and the chance to explore multiple crafts. The 1-skill-1-product business model of our personal economy is fading. The new model is to USE more of what we got from the indirect effects of other people’s work, build multi-skill identities, and recognize that the AI we feel steals from us also gives us possibilities.

“The 1-skill-1-product business model is structurally fragile in a world of statistical approximation. It must expand in scope and decision-making leverage to justify artistic and intellectual value that is no longer scarce for those who execute.”

The Core Issue of Artistic Signatures

A logo, an artist’s signature, a brand, and a name are possessions of the owner. They may or may not be sold, but they are universally considered the owner’s property and may be used only when permission is granted. When AI learns and mimics these, it is a simple problem with a very difficult solution. When humans take that property, it is stealing. When AI uses it as influence in its own creations, we have a fuzzy legal problem. Logos are easy to define. Artist signatures are not. These are the musical vibes, compositional decisions, brushstrokes, micro-design elements, etc. While logos and trademarks are easy to protect, artist signatures, their artistic fingerprints, can’t be protected easily because they aren’t easily defined. They evolve over time, and the artist embeds them during the artistic process, consciously or unconsciously. But one of the joys of life is appreciating that the signature is created and delivered as the artist intended. It is a fundamentally valued human experience, and I also believe it is likely a high-value AI-resistant skill. And this presents a problem to solve – how do we protect the artistic core?

Perhaps that is an essential technological problem to solve if we are to keep the gains of gen AI. It is imaginable that a signature can be extracted and owned by the creator using the same pattern recognition that we have accelerated so much using technology. Still, in most cases, an artist’s signature is a continuous dynamic influence on their output that changes as the skill develops, so it isn’t an easy object to own or sell. And copying a signature is often a tribute, mindless emulation, or an indirect outcome of learning from that artist, directly or via an LLM. In contrast, a logo or any brand identity or brand property is quite static, and misusing that is almost always some sort of bad intention or attempt to damage someone directly. Those are separate issues. I will keep my framework limited to learning from and creating with someone else’s output that contains an artistic signature.

Still, there is a harsh truth here – for most small-time artists, their signature might not be recognizable by those who use it via the generated images. And many artists may realize their signature has not even entered AI training, nor do most users of gen AI care about their personal signature, because they have no preference, or don’t even know about it. They could be happy with just about anything that they like upon seeing.

There are corrective measures for misrepresented signatures:

- If identified in LLM output, the human chooses not to use it, out of goodwill or policy.

- The fee paid to artists as a cost-to-companies for AI learning an artist’s signature should scale with the buyer, the same way humans have always sold things to individual students at a low cost and to big businesses at a high cost.

- A technological solution and an industry protocol must be created to allow the artist to authorize or revoke the use of their signature. This sort of tech could also use a zero-knowledge proof, where no one has to even interpret what the signature is or how it was created, just to keep the mystery alive. It can be done with high-dimensional embeddings without ever putting a word to it. It is a solvable but unsolved problem.

- An artist can extract their own signature, upload it to an AI provider, and block it from the AI. This may be one of the hardest problems in reverse-engineering how neural networks process information, but it seems doable someday. Even if this becomes possible, the economic issue persists because AI learnability remains.

- A signature can be injected with a data poison that corrupts AI training or inference (sci-fi, I know).
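To make the embedding idea in the list above slightly more concrete, here is a minimal sketch under loud assumptions. It assumes that some model (not shown) can turn an artwork into a list of numbers, and that an opt-out registry stores such vectors for artists who revoked permission. The registry, the threshold of 0.9, the example vectors, and the function names are all hypothetical. It also does not implement a zero-knowledge proof; it only shows that two signatures can be compared without anyone describing them in words.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two vectors by direction: 1.0 means identical direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector carries no direction to compare
    return dot / (norm_a * norm_b)

def is_blocked(output_embedding, opted_out_signatures, threshold=0.9):
    """True if the output is too close to any signature an artist has opted out."""
    return any(
        cosine_similarity(output_embedding, signature) >= threshold
        for signature in opted_out_signatures
    )

# Hypothetical registry of opted-out signature embeddings (3 numbers for brevity;
# real embeddings would have hundreds or thousands of dimensions).
registry = [[0.9, 0.1, 0.0], [0.0, 0.2, 0.98]]

close_output = [0.88, 0.12, 0.01]  # nearly the same direction as the first signature
far_output = [0.1, 0.9, 0.1]       # resembles neither registered signature

print(is_blocked(close_output, registry))  # True
print(is_blocked(far_output, registry))    # False
```

The hard part stays unsolved in this sketch: deciding what counts as a signature embedding in the first place, and where to set the threshold so that tribute and coincidence are not punished.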

Otherwise, we accept something uncomfortable. We let our signatures bleed into a system, and we accept that as the price paid for everything else we get and the boost to our core competencies. We can still feel hurt or damaged in some tangible way by our signatures going into a machine that doesn’t live up to our definition of “good intention”. But… that’s more of a psychological concern symbolically tied to our identity.

However, from a professional sustainability perspective, the deeper issue is this: the business model built around monetizing an artistic signature is fragile in a world where signatures can be statistically approximated. Unfortunately, for most artists, their profession involves the 1-skill 1-product model that makes their signature pretty much the biggest point of failure in business.

I consider not marrying a business model to an artistic signature (which can technically be learned but not owned or claimed by someone else) a necessary sacrifice. It worked well before, but it won’t work tomorrow. Leave money out of the signature in exchange for all the extra capabilities. Artistic signatures can be learned, so monetize the decisions made on top of artistic signatures.

An artist’s creation will evolve to be new and un-copy-able by AI at that moment, or the artist will be big enough not to worry about this problem. As for the rest… Business models die; Art does not.

P.S. At this moment, 03/03/2026, I am quite confident in this conclusion, but I do imagine my thoughts on this will continue to evolve as AI changes what it means to be human.

Sources

Hey! Thank you for reading; hope you enjoyed the article. I run Cognition Today to capture some of the most fascinating mechanisms that guide our lives. My content here has been referenced and featured in the NY Times, Forbes, CNET, Entrepreneur, and many books & research papers.

I am a psychology SME consultant in EdTech with a focus on AI cognition and Behavioral Engineering. I’m affiliated with myelin, an EdTech company in India, as well.

I’ve studied at NIMHANS Bangalore (positive psychology), Savitribai Phule Pune University (clinical psychology), and Fergusson College (BA psych), and been affiliated with IIM Ahmedabad (marketing psychology). I’m currently studying Korean at Seoul National University.

I’m based in Pune, India but living in Seoul, S. Korea. Love Sci-fi, horror media; Love rock, metal, synthwave, and K-pop music; can’t whistle; can play 2 guitars at a time.